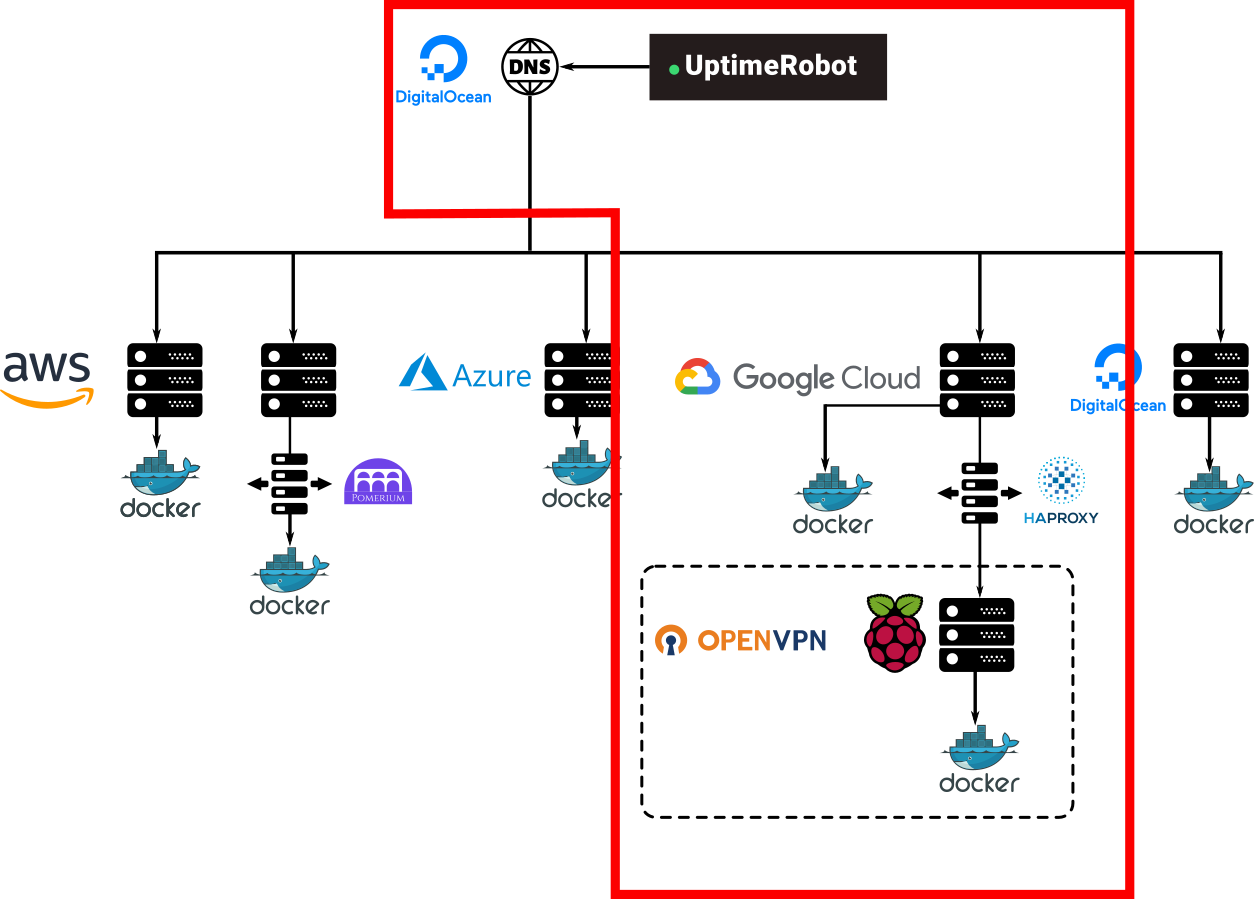

Not long ago I wrote a post about my personal infrastructure. It has been evolving since it was an old laptop running Nginx at home, and that evolution is reflected in the way it is structured. It has been changing for years alongside my knowledge of a lot of technologies: I learned about Docker, Terraform, VPNs and Ansible when it was already built, and that shows in its heterogeneous shape.

Self-hosting

In the beginning I focused on availability: I was moving and would no longer be able to physically access the laptop, so I switched to VPS instances and migrated everything to different cloud providers. Then came Dockerization, Terraformation, and so on.

Recently I decided to focus on self-hosting again. The two main reasons are to reduce my cloud providers bill, which at this point is starting to be a bit worrisome, and to keep my own data private. I believe in a world in which your data is not owned by some big corporation, and I want to be active about it.

To do so, I set up an OpenVPN server on one of the cloud instances I owned and experimented with some Raspberry Pis to get a hybrid approach: some applications (Docker containers) are served directly from the VPS instance, while others live on the Raspberry Pi.

This worked: I could reach app1.mydomain.com or app2.mydomain.com and it would be the same, regardless of whether the container was running on the Google Cloud instance or on the Raspberry Pi. Also, after spending a lot of time troubleshooting different issues with OpenVPN, I can say I gained enough experience with it not to have availability issues anymore.
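To make the "it would be the same" part concrete, here is a minimal sketch of the lookup a reverse proxy performs in a setup like this. It is not my actual configuration: the VPN addresses, the ports, and the `upstream_url` helper are all assumptions made up for the example. The point is only that the public hostname maps to an address inside the VPN, and the physical location of the container never enters the decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Upstream:
    """Where a container is reachable inside the VPN."""
    vpn_ip: str    # address of the host on the OpenVPN network (assumed value)
    port: int      # port published by the Docker container (assumed value)
    location: str  # informational only, routing never looks at it


# Hypothetical routing table: public hostname -> upstream inside the VPN.
ROUTES = {
    "app1.mydomain.com": Upstream("10.8.0.1", 8001, "cloud VPS"),
    "app2.mydomain.com": Upstream("10.8.0.6", 8002, "Raspberry Pi"),
}


def upstream_url(host_header: str) -> str:
    """Return the internal URL for the hostname a visitor asked for."""
    # Drop an optional ":port" suffix and normalise case before the lookup.
    hostname = host_header.split(":")[0].strip().lower()
    upstream = ROUTES[hostname]  # raises KeyError for unknown hostnames
    return f"http://{upstream.vpn_ip}:{upstream.port}"
```

Moving an application from the VPS to the Raspberry Pi would then only mean changing one entry in the table, while the hostname the visitor uses stays the same.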

Microservices and authentication proxy

During this time my way of designing application architecture also changed. I went from being a PHP developer to a Python developer and became interested in microservices, so the newer applications I was writing were different and required a different setup. The latest set of applications I made use FastAPI and, more importantly, share some services, like a Notifications Service for Telegram, or authentication, which is delegated to Pomerium.

This was a big mindset change for me. Before, I was conditioned by the way I wrote docker-compose files: one application would consist of one app container, one database container, one Redis container, etc. I was replicating this on the production infrastructure, which was very app-oriented but also much more expensive, since I was running one database Docker container for each database when I could be sharing them and saving some money.
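As an illustration of what "sharing a service" means from the application side, here is a rough sketch of how an app could hand a message to a shared notifications service over HTTP instead of talking to Telegram itself. The URL, the `/notify` path, and the JSON fields are hypothetical and do not describe the real interface of my Notifications Service. It only uses the Python standard library.

```python
import json
import urllib.request

# Hypothetical address of the shared notifications service.
NOTIFICATIONS_URL = "http://notifications.internal:8000/notify"


def build_notification(app_name: str, text: str) -> urllib.request.Request:
    """Prepare the HTTP request without sending it (easy to inspect and test)."""
    # Assumed payload shape: which app is speaking and what it wants to say.
    payload = json.dumps({"source": app_name, "text": text}).encode("utf-8")
    return urllib.request.Request(
        NOTIFICATIONS_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_notification(app_name: str, text: str, timeout: float = 5.0) -> int:
    """Send the notification and return the HTTP status code of the reply."""
    request = build_notification(app_name, text)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

With something like this, every application only needs to know one internal URL, and the Telegram credentials live in a single container.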

Another reason to start thinking about this redesign was my experience using Pomerium for the new applications. Delegating authentication to a proxy saved me and my "users" a lot of headaches, and naturally I wanted to put everything behind this proxy.

So now my idea is to build a homogeneous network of containers, all interconnected through OpenVPN regardless of whether they run on Google Cloud, AWS, Azure, or a Raspberry Pi at home, with most of them (but not all) protected by a Google Account login.

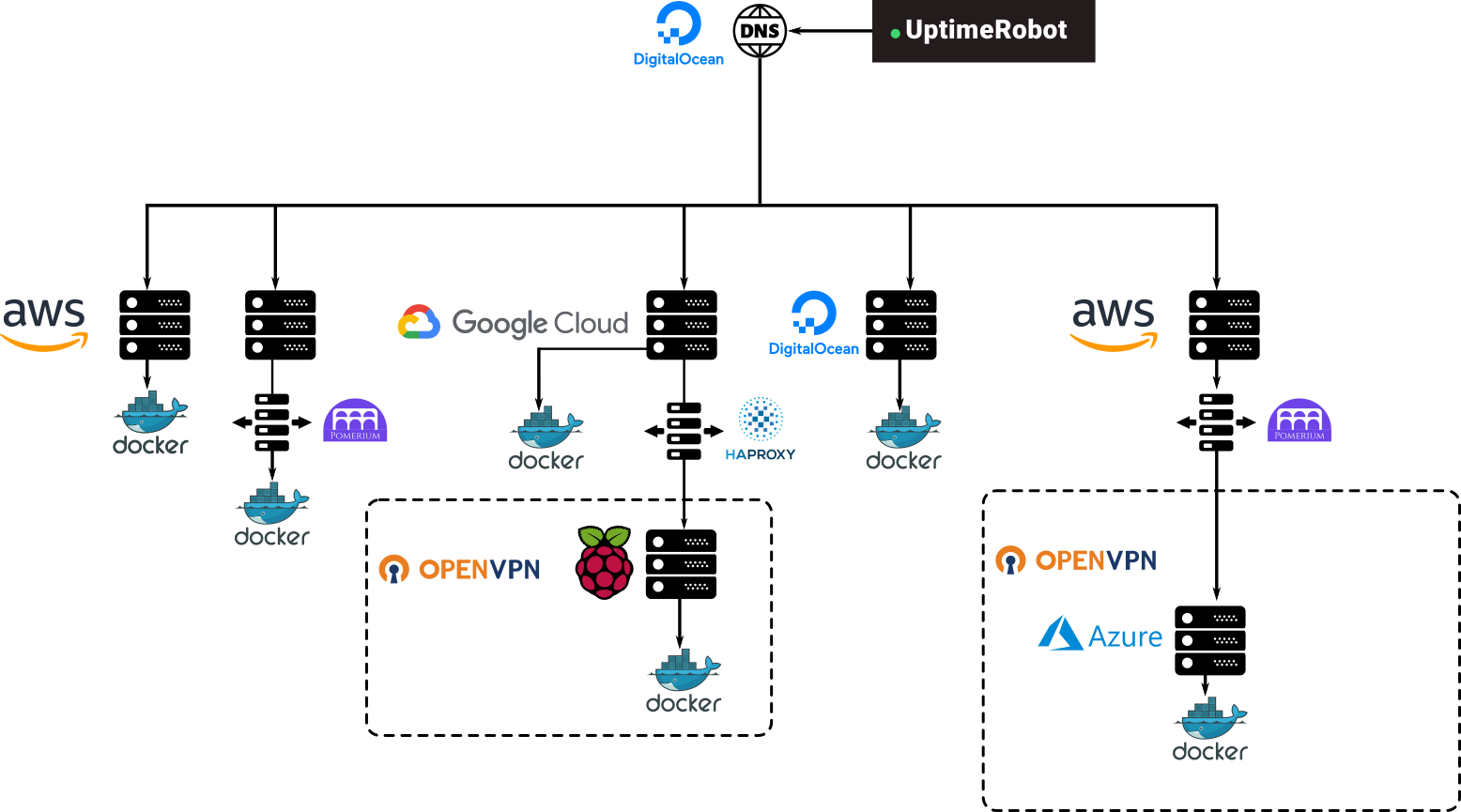

New VPS instance as a gate

The first successful step, which I took yesterday, was creating a new VPS instance that will act as a gate. All DNS records of all applications will eventually point to this gate, and its only purpose will be authorizing and redirecting. It also runs an OpenVPN server, so all the other instances that connect to it and receive traffic are OpenVPN clients. Another advantage is that HTTPS traffic and certificates are now only needed between the outside world and the gate; once past the gate, all traffic is plain HTTP inside the VPN. So certificate management is also centralized at one point.
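The job of the gate can be summed up as a small decision function. What follows is only a sketch of the logic, not Pomerium's configuration or API: the hostnames, the VPN addresses, the allowed email, and the `gate_decision` helper are all invented for the example. It assumes the gate sees the request scheme, the requested host, and the email of the logged-in Google Account (or `None` when nobody is logged in).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Route:
    upstream: str         # plain-HTTP address inside the VPN (assumed value)
    public: bool = False  # True means no login is required


# Hypothetical routes: most are protected, a few are left public.
ROUTES = {
    "kimai.mydomain.com": Route("http://10.8.0.2:8001"),
    "blog.mydomain.com": Route("http://10.8.0.3:8080", public=True),
}

# Hypothetical list of Google Accounts allowed through the gate.
ALLOWED_EMAILS = {"me@example.com"}


def gate_decision(scheme: str, host: str, user_email: Optional[str]) -> str:
    """Describe what the gate does with a single incoming request."""
    route = ROUTES.get(host)
    if route is None:
        return "404 unknown host"
    if scheme != "https":
        # TLS ends at the gate, so outside traffic must arrive over HTTPS.
        return f"redirect https://{host}"
    if not route.public and user_email not in ALLOWED_EMAILS:
        return "login required"
    # Past the gate, the request travels as plain HTTP over the VPN.
    return f"forward {route.upstream}"
```

For example, `gate_decision("https", "kimai.mydomain.com", None)` gives `"login required"`, while the same request with an allowed email is forwarded to the upstream inside the VPN.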

Yesterday I was able to create and configure the gate instance and then move the Azure instance that runs Kimai and Mealie behind it, so both applications are now protected by a Google Account and reachable only through the gate. The new infrastructure then looks something like this.

The idea is to progressively move everything to that single authorized OpenVPN network. That solves some of the problems but not all. I would still like to solve the interconnection issue: I don't know yet how easy it would be for a container to reach the notifications service running in another container of the network so it can use its services too, but... that's a problem for future me, and for a future blog post.

As always, you can see the up-to-date orchestration code for the whole infrastructure on:

You can even clone it and replicate it yourself, entirely or just the parts you are interested in. Everything I do is public and open source. Also, if you are interested in a more detailed explanation of some of the problems I faced when configuring this, I have a bunch of personal notes I wrote along the way. They are not structured enough to make a blog post, but if there is interest I can take some time to write things up properly.